Driverless cars worse at detecting children and darker-skinned pedestrians say scientists::Researchers call for tighter regulations following major age and race-based discrepancies in AI autonomous systems.

Easy solution is to enforce a buddy system. Every black person walking alone at night must be accompanied by a white person. /s

Loved that show.

But you have to hire equally so some may get darker skinned buddies. Who will then need buddies.

Within 3 months we will employ every human on the planet…

Money over people. That’s what it says right there on the lobby floor. It just looks more heroic in Latin.

I think this is one of my favorite TV episodes ever. Better Off Ted deserved better.

I kind of felt they changed it up a tad in season 2 to make it have a slightly wider appeal, but it was too late.

The show?

Better Off Ted. Very funny show about a giant company doing ridiculous things

LiDAR doesn’t see skin color or age. Radar doesn’t either. Infra-red doesn’t either.

LiDAR, radar and infra-red may still perform worse on children, because children are smaller and so fewer LiDAR returns (contact points) land on them.

I work in a self driving R&D lab.
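
To put rough numbers on the “fewer contact points” idea, here is a minimal sketch. The angular resolutions, target sizes and distance are made-up illustrative values, not the specs of any real sensor, and the target is treated as a flat rectangle facing the car.

```python
import math

def estimated_lidar_returns(target_height_m, target_width_m, distance_m,
                            vertical_res_deg=0.4, horizontal_res_deg=0.2):
    """Rough count of beams landing on a flat, upright target facing the sensor.

    The default resolutions are hypothetical, chosen only for illustration.
    """
    # Angle the target subtends in each direction, as seen from the sensor.
    v_angle = math.degrees(2 * math.atan(target_height_m / (2 * distance_m)))
    h_angle = math.degrees(2 * math.atan(target_width_m / (2 * distance_m)))
    # Whole beams that fit across the target along each axis.
    rows = int(v_angle / vertical_res_deg)
    cols = int(h_angle / horizontal_res_deg)
    return rows * cols

# Assumed body sizes, both standing 40 m away.
adult = estimated_lidar_returns(1.75, 0.5, 40.0)
child = estimated_lidar_returns(1.10, 0.3, 40.0)
print("adult returns:", adult, "child returns:", child)
```

The smaller target collects fewer returns at the same distance, which is the whole effect; skin color never enters the calculation.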

How about skin color? Does darker skin reflect LiDAR/infrared the same way as light skin?

Infrared cameras don’t depend on you reflecting infrared. You’re emitting it.

All matter emits light; the frequencies that it’s brightest in depend on the matter’s temperature. Objects around human body temperature mostly glow in the long-wave infrared. It doesn’t matter what your skin color is; “color” is a different chunk of spectrum.
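
A quick way to check the “long-wave infrared” claim is Wien’s displacement law, which gives the wavelength where an ideal black body is brightest. This is only a sketch: the skin temperature is an assumed round number, and real skin is not a perfect black body.

```python
WIEN_B = 2.897771955e-3  # Wien displacement constant, in metre-kelvin

def peak_wavelength_um(temp_kelvin):
    """Peak emission wavelength of an ideal black body, in micrometres."""
    return WIEN_B / temp_kelvin * 1e6

skin_peak = peak_wavelength_um(305.0)   # assumed skin surface temperature, about 32 C
sun_peak = peak_wavelength_um(5772.0)   # approximate solar surface temperature

print(f"skin peaks near {skin_peak:.1f} um, the Sun near {sun_peak:.2f} um")
```

Body-temperature objects peak at roughly 9 to 10 micrometres, far from the visible band (about 0.4 to 0.7 micrometres) where skin color shows up.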

Sorry, I misunderstood. I know about all that, I just thought it meant active infrared lighted cameras. So basically, an IR light on the car, illuminating the road ahead, and then just using a near-IR camera like a regular optical camera.

I didn’t think it meant a far-IR camera passively filming black body radiation, because I thought the resolution (both spatially and temporally) of these cameras is usually really low. Didn’t think they were fast and high-res enough to be used on cars.

Would you be willing to share some neato stuff about your job with us?

That’s a fair observation! LiDAR, radar, and infra-red systems might not directly detect skin color or age, but the point being made in the article is that there are challenges when it comes to accurately detecting darker-skinned pedestrians and children. It seems that the bias could stem from the data used to train these AI systems, which may not have enough diverse representation.

The main issue, as someone else pointed out as well, is in image detection systems only, which is what this article is primarily discussing. Lidar does have its own drawbacks, however. I wouldn’t be surprised if those systems would still not detect children as reliably. Skin color definitely wouldn’t be a consideration for it, though, as that’s not really how that tech works.

Ya hear that Elno?

Do lidar and infrared work equally on white and black people? Both are still optical systems, and I don’t know how well black people reflect infrared light.

Seriously no pun intended: infrared cameras see black-body radiation, which depends on the temperature of the object being imaged, not its surface chemistry. As long as a person has a live human body temperature, they’re glowing with plenty of long-wave IR to see them, regardless of their skin melanin content.

I thought we were talking about near-IR cameras using active illumination (so, an IR spotlight).

I didn’t think long-wave IR cameras are fast enough and have enough resolution to be used on a car. Every one I have seen so far gives you a low-res low-FPS image, because there just isn’t enough long-wave IR falling into the lens.

You know, on any camera you have to balance exposure time and noise/resolution with the amount of light getting into the lens. If the amount of light decreases, you have to either take longer exposures (->lower FPS), decrease the resolution (so that more light falls onto one pixel), or increase the ISO, thereby increasing noise. Or increase the lens diameter, but that’s not always an option.

And since long-wave IR has super little “light”, I didn’t think the result would work for cars, which do need a decent amount of resolution, high FPS and low noise so that their image recognition algos aren’t confused.
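
The exposure trade-off above can be sketched with a toy model. It assumes collected light scales with lens area, exposure time and pixel area, and that noise is pure photon shot noise (signal-to-noise equals the square root of the collected signal). All units are arbitrary and the function names are made up for this example.

```python
import math

def relative_signal(aperture_diameter, exposure_time, pixel_area, scene_brightness):
    """Light collected per pixel in arbitrary units (simplified model)."""
    lens_area = math.pi * (aperture_diameter / 2) ** 2
    return scene_brightness * lens_area * exposure_time * pixel_area

def shot_noise_snr(signal):
    """Signal-to-noise ratio if photon shot noise is the only noise source."""
    return math.sqrt(signal)

baseline = relative_signal(1.0, 1.0, 1.0, scene_brightness=100.0)
dim = relative_signal(1.0, 1.0, 1.0, scene_brightness=25.0)

# Three ways to win back a 4x loss of light:
longer_exposure = relative_signal(1.0, 4.0, 1.0, 25.0)  # 4x exposure time, so lower FPS
bigger_pixels = relative_signal(1.0, 1.0, 4.0, 25.0)    # 4x pixel area, so lower resolution
bigger_lens = relative_signal(2.0, 1.0, 1.0, 25.0)      # 2x lens diameter, so 4x lens area

print(shot_noise_snr(baseline), shot_noise_snr(dim))
```

Raising the ISO is deliberately left out: it amplifies what was already collected, noise included, so in this model it does not change the signal-to-noise ratio.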

Isn’t that true for humans as well? I know I find it harder to see children due to the small size and dark skinned people at night due to, you know, low contrast (especially if they are wearing dark clothes).

Human vision be racist and ageist

Ps: but yes, please do improve the algorithms

Part of the children problem is distinguishing between ‘small’ and ‘far away’. Humans seem reasonably good at it, but from what I’ve seen AIs aren’t there yet.
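
The ‘small’ versus ‘far away’ ambiguity falls straight out of the pinhole camera model, where apparent height in pixels is focal length times real height divided by distance. A minimal sketch, with an assumed focal length and assumed body heights:

```python
def projected_height_px(real_height_m, distance_m, focal_length_px=1000.0):
    """Apparent height in pixels under a simple pinhole camera model.

    The default focal length is a hypothetical value for illustration.
    """
    return focal_length_px * real_height_m / distance_m

child_near = projected_height_px(1.10, 22.0)  # assumed 1.10 m child, 22 m away
adult_far = projected_height_px(1.75, 35.0)   # assumed 1.75 m adult, 35 m away

print(child_near, adult_far)
```

Both come out at about the same pixel height, so a single camera frame cannot tell them apart from size alone. It needs extra cues such as stereo depth, motion, or where the feet meet the ground.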

Yeah, this probably accounts for 90% of the issue.

Maybe if we just, I dunno, funded more mass transit and made it more accessible? Hell, trains are way better at being automated than any single car.

That works in a city, but it’s not viable to have mass transit in every place; you still need cars for rural areas.

Those areas don’t really have silly things like pedestrians, though. They’re also far too small of a market to design self-driving cars for.

There are areas that are not rural that need cars, because the public transit sucks.

There’s no fast way to go to San Jose where my friend lives from Sunnyvale where I’m staying.

Walking is 3 hours, but walking to a train stop and then walking from the train stop would be about 2 hours. Buses don’t connect well either, so it’s still like 2 hours after you do all the transfers.

Or I could pay $17 for a Lyft and make it there in 20 minutes.

Yes, public transit should be better in the suburbs, but you’re talking about a very large SF bay area that needs better connections from everywhere to everywhere

The SF Bay area needs more funding for transportation, period. The roads seem to always be under construction and the traffic lights just spontaneously stop working sometimes.

Yes, but also improve the detection tools for kids and dark-skinned people; they aren’t just for driving cars. Efficient, fast and accurate people detection and tracking tools can be used in a myriad of other things.

Imagine a system that tracks the number of people in different sections of a store, or a system that counts people going in and out of stores to control how many are inside… There are a lot of tools that already do this, and they work somewhat reliably, but they can be improved, and the models being developed for cars can then be reused. R&D is a good thing.

An AI that can follow black people around a store? You might be onto something.

The trains in California are trash. I’d love to see good ones, but this isn’t even a thought in the heads of those who run things.

Dreaming is nice… But reality sucks, and we need to deal with it. Self driving cars are a wonderful answer, but Tesla is fucking it up for everyone.

Strongly disagree. Trains are nice everywhere in the world. There’s no reason they can’t be nice in the US. Cars are trash. Strip malls are trash. Giant parking lots are trash. The sky high cost of cars is trash. The environmental impact of cars is trash. The danger of cars is trash. Car centric urban planning is trash.

Self-driving cars are safer… than the most dangerous thing ever. But because cars are inherently so dangerous, they are still more dangerous than just about any other mode of transportation.

Dreaming is nice, but that’s all self-driving cars are right now. I don’t see why we don’t have better dreams.

A fellow fuckcars fan. Also important to remember that the US has been systematically lobbied to make public transport, trains, etc way worse.

Chiming in from Seattle, we just built light rail up here and it’s just awful how slow they made it. It has its own track… It’s insane that it’s slower than driving in traffic. But they wanted to serve every neighborhood possible instead of realizing trains are not a last mile solution unless you build cities specifically around it.

Reporting from Vancouver, Canada. Our skytrain system is very fast and reliable. Comes every 1-3 minutes. I’ve never heard any complaints.

I looked this up and I was surprised to learn that the skytrain speed is 25-40km/h (20-25mph) while Seattle’s Link transit goes 35-55mph. That sounds very fast for a city transit system! Are you sure it’s slower than a car in traffic, with all the stop lights and rush hour? I’m skeptical but I’ve never used Link.

Google Maps right now puts the light rail at one hour for what is a 23-minute drive. Last time I rode it, it took over an hour and a half for that same trip, and that’s excluding the time waiting for the train.

Trains in California suck because of government dysfunction across all levels. At the municipal level, you can’t build shit because every city is actually an agglomeration of hundreds of tiny municipalities that all squabble with each other. At the regional level, you get NIMBYism that doesn’t want silly things like trains knocking down property values… And these people have a voice, because democracy I guess (despite there being a far larger group of people that would love to have trains). At the state level, you have complete funding mismanagement and project management malfeasance that makes projects both incredibly expensive and developed with no forethought whatsoever (Caltrain has how many at-grade crossings, again?).

This isn’t a train problem, it’s a problem with your piss-poor government. At least crime is down, right?

DRIVERLESS CARS: We killed them. We killed them all. They’re dead, every single one of them. And not just the pedestmen, but the pedestwomen and the pedestchildren, too. We slaughtered them like animals. We hate them!

I’m sick of the implication that computer programmers are intentionally or unintentionally adding racial bias to AI systems. As if a massive percentage of software developers in NA aren’t people of color. When can we have the discussion where we talk about how photosensitive technology and contrast ratio works?

There’s still a huge racial disparity in tech work forces. For one example, at Google according to their diversity report (page 66), their tech workforce is 4% Black versus 43% White and 50% Asian. Over the past 9 years (since 2014), that’s an increase from 1.5% to 4% for Black tech workers at Google.

There’s also plenty of news and research illuminating bias in trained models, from commercial facial recognition sets trained with >80% White faces to Timnit Gebru being fired from Google’s AI Ethics group for insisting on admitting bias and many more.

I also think it overlooks serious aspects of racial bias to say it’s hard. Certainly, photographic representation of a Black face is going to provide less contrast within the face than for lighter skin. But that’s also ingrained bias. The thing is, people (including software engineers) solve tough problems constantly, have to choose which details to focus on, and rely on our experiences, and our experience is centered around ourselves. Of course racist outcomes and stereotypes are natural, but we can identify the likely harmful outcomes and work to counter them.

Wouldn’t good driverless cars use radars or lidars or whatever? Seems like the biggest issue here is that darker skin tones are harder for cameras to see

Tesla removed the LiDAR from their cars, a step backwards if you ask me.

Edit: Sorry RADAR not LiDAR.

They removed the radars, they’ve never used LiDAR as Elon considered it “a fool’s errand”, which translates to “too expensive to put in my penny pinched economy cars”. Also worth noting that they took the radars out purely to keep production and the stock price up, despite them knowing well in advance performance was going to take a massive hit without it. They just don’t give a shit, and a few pedestrian deaths are 100% worth it to Elon with all the money he made from the insane value spike of the stock during COVID. They were the one automaker who maintained production because they just randomly swapped in whatever random parts they could find, instead of anything properly tested or validated, rather than suck it up for a bad quarter or two like everyone else.

Seems like Tesla is really not going to be the market leader on this. IDK if anyone else caught those videos by the self driving tech expert going through all the ways Tesla is bullshitting about it.

Hey, they’ll have full self driving tech next year!

Source: Elon Musk, every year, for like the last ten years.

Elon’s bullshit aside, they are leading self driving and are the company with the most data to train machine learning models on, by FAR.

Their self driving challenges are not a result of data acquisition, adding LIDAR to the mix would not be helpful. Pedestrian detection is not the big unsolved problem this article makes it sound like.

If the latest FSD update is anything to go by, they won’t be a leader for much longer if every release makes it worse.

I think many driverless car companies insist on only using cameras. I guess lidars/radars are expensive.

They’re basically the only one. Even MobilEye, who is objectively the best in the ADAS/AV space for computer vision, uses other sensors in their fleet. They have demonstrated camera only autonomy, but realize it’s not worth the $1000 in sensors to risk killing people.

Even Comma.AI, which is vision-only internally, still implicitly relies on the car’s built-in radar for collision detection and blind spot monitoring. It’s just Tesla.

To be fair, that’s because most cars aren’t equipped with cameras for blind spot detection.

That’s because cameras aren’t good for blind spot detection. Moreover, even for cars that have cameras on the side, the Comma doesn’t use them. AFAIK, in my car with 360 cameras, the OEM system doesn’t use the cameras for blind spot either.

I hate all this bias bullshit because it makes the problem bigger than it actually is and passes the wrong idea to the general public.

A pedestrian detection system shouldn’t have as its goal to detect skin tones and different pedestrian sizes equally. There’s no benefit in that. It should do the best it can to reduce the false negative rates of pedestrian detection regardless, and hopefully do better than human drivers in the majority of scenarios. The error rates will be different due to the very nature of the task, and that’s ok.

This is what actually happens during research for the most part, but the media loves to stir some polarization and the public gives their clicks. Pushing for a “reduced bias model” is actually detrimental to the overall performance, because it incentivizes development of models that perform worse in scenarios they could have an edge just to serve an artificial demand for reduced bias.

I think you’re misunderstanding what the article is saying.

You’re correct that it isn’t the job of a system to detect someone’s skin color, and judge those people by it.

But the fact that AVs detect dark skinned people and short people at a lower effectiveness is a reflection of the lack of diversity in the tech staff designing and testing these systems as a whole.

The staff are designing the AVs to safely navigate in a world of people like them, but when the staff are overwhelmingly male, light skinned, young and single, and urban, and in the United States, a lot of considerations don’t even cross their minds.

Will the AVs recognize female pedestrians?

Do the sensors sense light spectrum wide enough to detect dark skinned people?

Will the AVs recognize someone with a walker or in a wheelchair, or some other mobility device?

Toddlers are small and unpredictable.

Bicyclists can fall over at any moment.

Are all these AVs being tested in cities being exposed to all the animals they might encounter in rural areas like sheep, llamas, otters, alligators and other animals who might be in the road?

How well will AVs tested in urban areas fare on twisty mountain roads that suddenly change from multi lane asphalt to narrow twisty dirt roads?

Will they recognize tractors and other farm or industrial vehicles on the road?

Will they recognize something you only encounter in a foreign country like an elephant or an orangutan or a rickshaw? Or what’s it going to do if it comes across that tomato festival in Spain?

Engineering isn’t magical: It’s the result of centuries of experimentation and recorded knowledge of what works and doesn’t work.

Releasing AVs on the entire world without testing them on every little thing they might encounter is just asking for trouble.

What’s required for safe driving without human intelligence is more mind boggling the more you think about it.

But the fact that AVs detect dark skinned people and short people at a lower effectiveness is a reflection of the lack of diversity in the tech staff designing and testing these systems as a whole.

No, it isn’t. It’s a product of the fact that dark people are darker and children are smaller. Human drivers have a harder time seeing these individuals too. They literally send less data to the camera sensor. This is why people wear reflective vests for safety at night, and ninjas dress in black.

This is true, but Tesla and others could compensate for this by spending more time and money training on those form factors, something humans can’t really do. It’s an opportunity for them to prove the superhuman capabilities of their systems.

That doesn’t make it better.

It doesn’t matter why they are bad at detecting X, it should be improved regardless.

Also maybe LiDAR would be a better idea.

They literally send less data to the camera sensor.

So maybe let’s not limit ourselves to using hardware which cannot easily differentiate when there is other hardware, or combinations of hardware, which can do a better job at it?

Humans can’t really get better eyes, but we can use more appropriate hardware in machines to accomplish the task.

That is true. I almost hit a dark guy, wearing black, who was crossing a street at night with no streetlight as I turned into it. Almost gave me a heart attack. It is bad enough almost getting hit, as a white guy, when I cross a street with a streetlight.

These are important questions, but addressing them for each model built independently and optimizing for a low “racial bias” is the wrong approach.

In academia we have reference datasets that serve as standard benchmarks for data-driven prediction models like pedestrian detection. The numbers obtained on these datasets are usually the reference points used when comparing different models. By building comprehensive datasets we get models that work well across a multitude of scenarios.

Those are all good questions, but they need to be addressed when building such datasets. And whether model M performs X% better at detecting people of a given skin color is not relevant, as long as the error rate for every skin color stays within an acceptable range.
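
As a rough illustration of “every group within an acceptable error rate”, here is a minimal sketch that computes the miss rate (false negatives over ground-truth pedestrians) per group and flags any group above a chosen limit. The group labels, the limit and the records are toy values invented for this example, not data from the study or from any benchmark.

```python
from collections import defaultdict

def miss_rate_by_group(records):
    """records: (group, detected) pairs, one per ground-truth pedestrian."""
    totals = defaultdict(int)
    misses = defaultdict(int)
    for group, detected in records:
        totals[group] += 1
        if not detected:
            misses[group] += 1
    return {group: misses[group] / totals[group] for group in totals}

def groups_over_limit(rates, max_miss_rate):
    """Groups whose miss rate exceeds the acceptable limit, sorted by name."""
    return sorted(g for g, rate in rates.items() if rate > max_miss_rate)

# Toy records: purely illustrative, not real measurements.
records = (
    [("group_a", True)] * 19 + [("group_a", False)] * 1
    + [("group_b", True)] * 16 + [("group_b", False)] * 4
)

rates = miss_rate_by_group(records)
flagged = groups_over_limit(rates, max_miss_rate=0.10)
print(rates, flagged)
```

The check is per group rather than on the average, so a model with a good overall miss rate can still fail it if one group sits above the limit.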

The media has become ridiculously racist, they go out of their way to make every incident appear to be racial now

The study only used images and the image recognition system, so this will only be accurate for self driving systems that operate purely on image recognition. The only one that does that currently is Tesla AFAIK.

This has been the case with pretty much every single piece of computer-vision software to ever exist…

Darker individuals blend into dark backgrounds better than lighter skinned individuals. Dark backgrounds are more common than light ones, i.e., the absence of sufficient light is more common than 24/7 well-lit environments.

Obviously computer vision will struggle more with darker individuals.

Visible light is racist.

No it’s because they train AI with pictures of white adults.

It literally wouldn’t matter for lidar, but Tesla uses visual cameras to save money and that weighs down everyone else’s metrics.

Lumping lidar cars with Tesla makes no sense

If the computer vision model can’t detect edges around a human-shaped object, that’s usually a dataset issue or a sensor (data collection) issue… And it sure as hell isn’t a sensor issue because humans do the task just fine.

Do they? People driving at night quite often have a hard time seeing pedestrians wearing dark colors.

And it sure as hell isn’t a sensor issue because humans do the task just fine.

Sounds like you have never reviewed dash camera video or low light photography.

Which cars are equipped with human eyes for sensors?

Worse than humans?!

I find that very hard to believe.

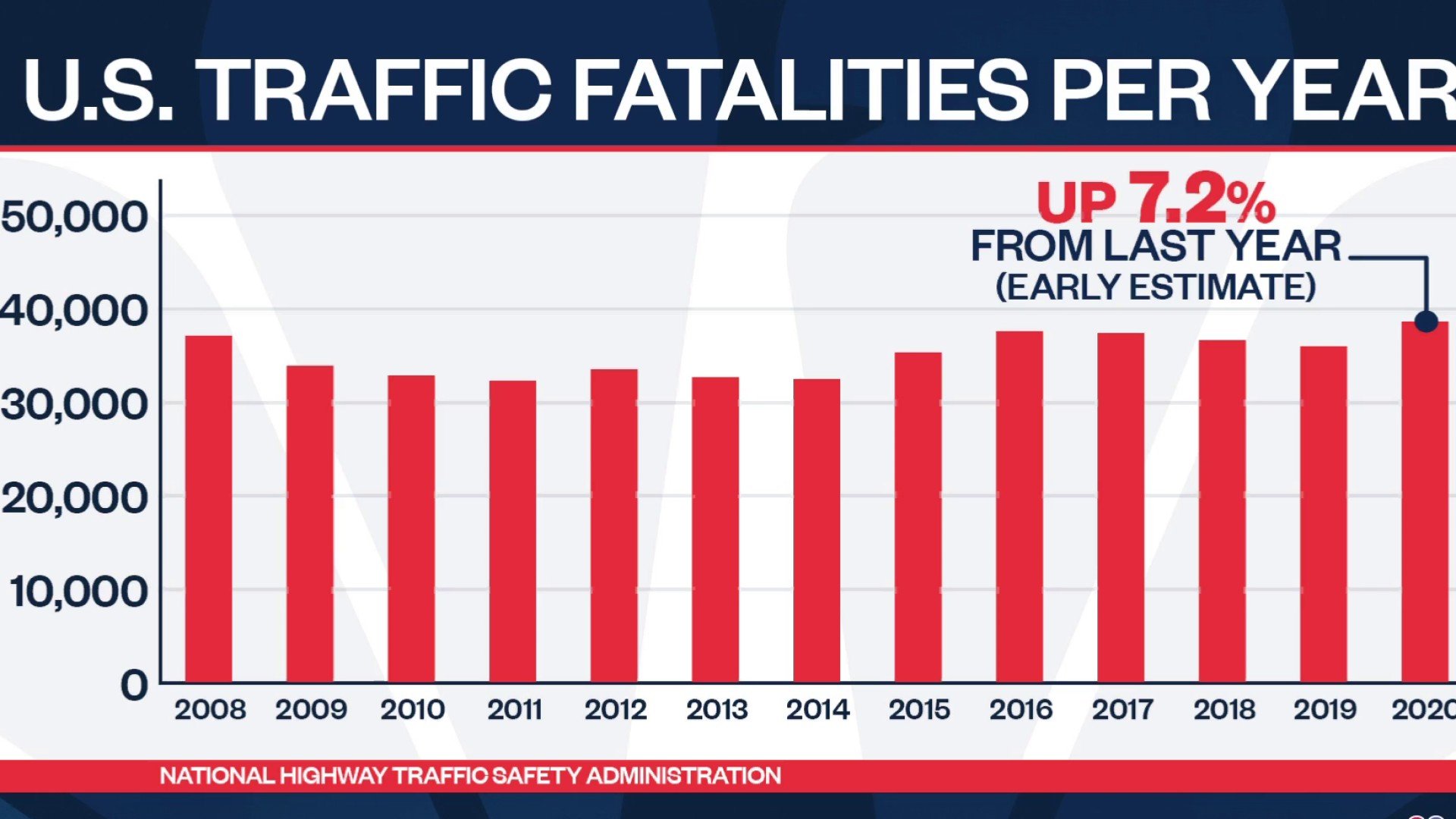

We consider it the cost of doing business, but self-driving cars have an obscenely low bar to surpass us in terms of safety. The biggest hurdle it has to climb is accounting for irrational human drivers and other irrational humans diving into traffic that even the rare decent human driver can’t always account for.

American human drivers kill more people than 10 9/11s worth of people every year. I’d rather modernizing and automating our roadways would be a moonshot national endeavor, but we don’t do that here anymore, so we complain when the incompetent, narcissistic asshole who claimed the project for private profit turned out to be an incompetent, narcissistic asshole.

The tech is inevitable, there are no physics or computational power limitations standing in our way to achieve it, we just lack the will to be a society (that means funding stuff together through taxation) and do it.

Let’s just trust another billionaire to do it for us and act in the best interests of society though, that’s been working just gangbusters, hasn’t it?

Not necessarily worse than humans, no, just worse than it can detect light skinned and tall people.

You’re proving their point. That’s Tesla, the one run by an edgy, narcissistic, billionaire asshole, not the companies with better tech under (and above, in this case) the hood.

All I am saying is that the article doesn’t attempt to make any comparison between humans’ and AI’s ability to detect dark skinned people or children… the “worse” mentioned in the poorly worded (misleading) headline was comparing the detection rates of AI only.

2020 was lockdown year, how on earth have accidents increased in the US?

Ah yes, because all humans are equally bad drivers.

A self-driving car shouldn’t compete with the average human because the average human is a fucking idiot. A self-driving car should drive better than a good driver, or else you’re just putting more idiots on the road.

Replacing bad drivers with ok drivers is a net win. Let’s not let perfection be the enemy of progress.

Any black people or children in your ‘study’?

Self driving cars are republicans?

What? No. They’d need to recognise them better - otherwise how can they swerve to make sure they hit them?

Built by them, inherited their biases.

They just want to do abortions on the road. Just a few years after birth.

Weird question, but why does a car need to know if it’s a person or not? Like regardless of if it’s a person or a car or a pole, maybe don’t drive into it?

Is it about predicting whether it’s going to move into your path? Well can’t you just use LIDAR to detect an object moving and predict the path, why does it matter if it’s a person?

Is it about trolley probleming situations so it picks a pole instead of a person if it can’t avoid a crash?

I’m guessing it can’t detect them as objects at all, not that it can’t classify them as humans.

That seems like the car is relying way too much on video to detect surroundings…

Bingo.

Sooo… like the Tesla?

Haha yes, but from the article I got the impression it was across all tested brands. Tesla is being called out at the moment for not having the appropriate hardware that other brands are using (e.g. LIDAR).

Conant and Ashby’s good regulator theorem in cybernetics says, “Every good regulator of a system must be a model of that system.”

The AI needs an accurate model of a human to predict how humans move. Predicting the path of a human is different than predicting the path of other objects. Humans can stand totally motionless, pivot, run across the street at a red light, suddenly stop, fall over from a heart attack, be curled up or splayed out drunk, slip backwards on some ice, etc. And it would be computationally costly, inaccurate, and pointless to model non-humans in these ways.

I also think trolley problem considerations come into play, but more like normativity in general. The consequences of driving quickly amongst humans are higher than amongst human-height trees. I don’t mind if a car drives at a normal speed on a tree-lined street, but it should slow down on a street lined with playing children who could jump out at any time.

Thanks, you make some good points. (safe) human drivers drive differently in situations with a lot of people in them, and we need to replicate that in self-driving cars.

Anyone who quotes Ashby et al gets an upvote from me! I’m always so excited to see cybernetic thinking in the wild.

Cameras and image recognition are cheaper than LIDAR/RADAR, so Tesla uses it exclusively.

They need to safely ignore shadows and oil stains on the road; just because there’s contrast in an image doesn’t mean it’s an object.

Sure but why on earth are we relying on cameras to drive cars? Many modern cars have radar, which is far more reliable.

Natural vision is awesome, it works for billions of humans. We just have nothing close to what the human eyes and brain offers in terms of tech in that spectrum.

I think it needs to be a combination of sensors since radar sucks in the rain/snow/fog.

I’d assume that’s either due to bias in the training set, or poor design choices. The former is already a big problem in facial recognition, and can’t really be fixed unless we update datasets. With the latter, this could be using things like visible light for classification, where the contrast between target and background won’t necessarily be the same for all skin tones and times of day. Cars aren’t limited by DNA to only grow a specific type of eye, and you can still create training data from things like infrared or LIDAR. In either case though, it goes to show how important it is to test for bias in datasets and deal with it before actually deploying anything…

In this case it’s likely partly a signal-to-noise problem that can’t be mitigated easily. Both children and dark skinned people produce less signal to a camera because they reflect less light: children because they’re smaller, and dark skinned people because their skin tones are darker. This will cause issues in the stereo vision algorithms that are finding objects and getting distance to them. Lidar would solve the issue, but companies don’t want to use it because lidars with a fast enough update rate and high enough resolution for safe highway driving are prohibitively expensive for a passenger vehicle (60k+ for just the sensor).

Darker toned people are harder to detect because they reflect less light. The tiny cheap sensors on cameras do not have enough aperture for lower light detections. It’s not training that’s the problem, it’s hardware.

I’m no expert, but perhaps thermal camera + lidar sensor could help.

It’s amazing Elon hasn’t figured this out. Then again, Steve Jobs said no iPhone would ever have an OLED screen.

We should just assume CEOs are stupid at this point. Seriously. It’s a very common trend we all keep seeing. If they prove otherwise, then that’s great! But let’s start them at “dumbass” and move forward from there.